
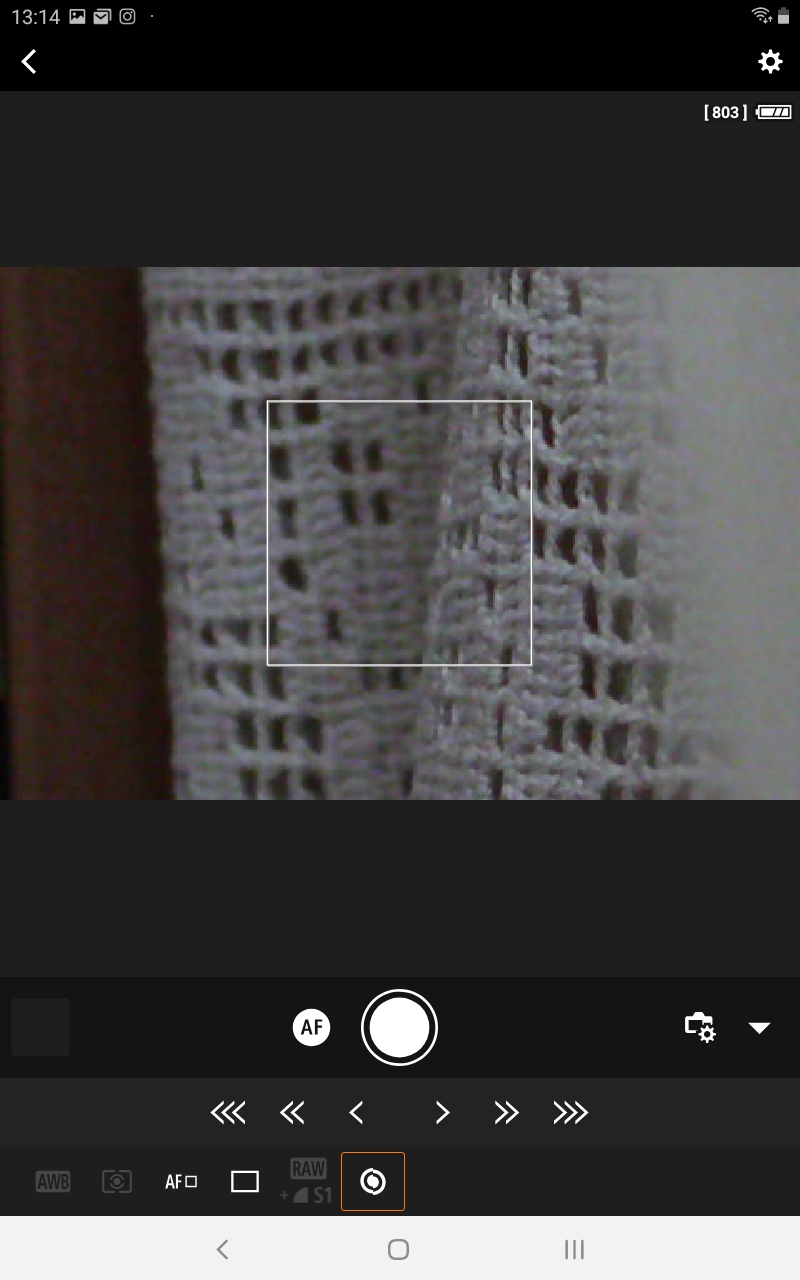

Although the ultimate goal is stereo time lapse, an interesting first step is to create video files from a single camera view. Cameras are set up by the captwo program to produce five raw images (CR2 format) at each time step, with exposure times of 1ms, 8ms, 66.6…ms, 500ms and 4000ms. These must be combined into a single image at each time step, and those images assembled into a video. If you follow my approach, each time-lapse sequence will be based on at least a few thousand photos, each of which is about 4 megabytes in JPEG format. There are doubtless other means to create time-lapse videos, using video cameras for example, and surely these end up with smaller files than I create with my workflow.

Noise is another thing to contend with in night photos. Whether it’s random white, red or blue specks or grain, we all experience noise. It’s caused by various things, including heat, increased light sensitivity of the camera, insufficient information or other factors.
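As a quick sanity check on the bracket described above, the sketch below works out the spacing, in photographic stops (powers of two), between consecutive exposures, plus a rough storage figure based on the ~4 MB per photo quoted above. It assumes that 66.6…ms means 1/15 s; the 3000-photo count is only an illustrative stand-in for "a few thousand", and the function names are hypothetical.

```python
import math

# Exposure times of the five bracketed shots, in milliseconds.
# Assumption: "66.6...ms" is taken to mean 1/15 s.
EXPOSURES_MS = [1.0, 8.0, 1000.0 / 15, 500.0, 4000.0]


def stop_gaps(exposures_ms):
    """Gap in stops (log2 of the exposure ratio) between consecutive shots."""
    return [math.log2(b / a) for a, b in zip(exposures_ms, exposures_ms[1:])]


def total_span_stops(exposures_ms):
    """Span in stops from the shortest to the longest exposure."""
    return math.log2(max(exposures_ms) / min(exposures_ms))


def sequence_size_gb(num_photos, mb_per_photo=4.0):
    """Rough storage estimate for one sequence, in decimal gigabytes."""
    return num_photos * mb_per_photo / 1000.0


if __name__ == "__main__":
    print([round(g, 2) for g in stop_gaps(EXPOSURES_MS)])
    print(round(total_span_stops(EXPOSURES_MS), 2))
    print(sequence_size_gb(3000))
```

With these values each step is close to 3 stops, the whole bracket spans just under 12 stops, and 3000 photos at 4 MB each come to about 12 GB.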

Time-lapse video is typically created by taking still images and using them as the frames of a video, in software such as Adobe After Effects, Premiere or Apple Final Cut Pro. Comprehensive techniques exist for combining multiple images into a high dynamic range image and for simulating different camera response curves (see ). I did begin to implement my own, but it was taking too long. However, to get some initial results I simply wanted a usable low-dynamic-range image at each time step, without too much concern about simulating a consistent camera response. I set about doing this by defining the dynamic range of most interest to be that of the image with the most information.

I searched for a CR2 loader written in Python and couldn’t find one (Matlab may also have been good, but I don’t have it installed at the moment), so I took the (slightly ugly) shortcut of using the publicly available ImageMagick executable to convert to PNG first. I found that after installing ImageMagick the following Python code snippet will successfully open a CR2, resize it to a size suitable for video (720×480), and save it as PNG (lossless compression):

```python
from subprocess import call
call()
```
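The ImageMagick shortcut and the "image with the most information" rule can be sketched together as follows. This is not the original snippet: the helper names are hypothetical, `convert` is the ImageMagick 6 command name (ImageMagick 7 uses `magick`), reading CR2 files depends on the installation having a raw-decoding delegate, and measuring "information" as the Shannon entropy of an 8-bit histogram is an assumption on my part.

```python
import math
import subprocess
from collections import Counter


def build_convert_command(src, dst, size="720x480", binary="convert"):
    """ImageMagick command that reads src, resizes it to fit within `size`
    (aspect ratio preserved) and writes dst; the output format follows
    dst's extension, e.g. .png for lossless output."""
    return [binary, src, "-resize", size, dst]


def convert_cr2_to_png(src, dst, size="720x480", binary="convert"):
    """Run ImageMagick; raises CalledProcessError if the conversion fails."""
    subprocess.run(build_convert_command(src, dst, size, binary), check=True)


def histogram_entropy(pixels):
    """Shannon entropy, in bits, of the histogram of 8-bit pixel values."""
    counts = Counter(pixels)
    n = len(pixels)
    return -sum((c / n) * math.log2(c / n) for c in counts.values())


def most_informative(frames):
    """Index of the frame (a list of pixel values) with the highest entropy."""
    return max(range(len(frames)), key=lambda i: histogram_entropy(frames[i]))
```

A heavily under- or over-exposed frame collapses most pixels into a few histogram bins and so scores low, while a well-exposed frame spreads across many levels. Loading PNG pixels into a list (for example with Pillow's `Image.open(path).convert("L").getdata()`) is left out to keep the sketch dependency-free. Because 720×480 has the same 3:2 shape as the camera's frames, `-resize 720x480` should fill the target exactly.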

It wasn’t too difficult to look at images from the NAS box when there were only a few days’ worth of data, as I was using the public folder, which is automatically shared on the network using Samba. But now there are hundreds of gigabytes of image files, all within the same folder. Also, any attempts to use rsync to update with only the newly taken images (i.e.

By default the iperf3 client sends data to the server, but we really want to simulate data coming the other way. The roles can be reversed using the -R parameter:

```
src/iperf3 -4R -c my_ts109_name
```

This gives disappointing and revealing results:

```
0.00-3.36   sec   384 KBytes   937 Kbits/sec
3.36-3.36   sec  0.00 Bytes   0.00 bits/sec
3.36-7.69   sec   256 KBytes   484 Kbits/sec
7.69-7.69   sec  0.00 Bytes   0.00 bits/sec
7.69-9.37   sec   256 KBytes  1.25 Mbits/sec
9.37-9.37   sec  0.00 Bytes   0.00 bits/sec
9.37-11.05  sec   256 KBytes  1.25 Mbits/sec
0.00-11.05  sec  1.25 MBytes   949 Kbits/sec  receiver
0.00-11.05  sec  1.12 MBytes   854 Kbits/sec  receiver
```

So there’s something seriously wrong with the data rates obtained when the TS-109 is asked to send data to the Mac. Now I just need to find out what that might be… …and in the end it turned out to be something to do with cabling or my network switch (I still haven’t figured out which). If I connected a single cable from the Mac to the NAS and set fixed IPs on both, then all worked nicely, as follows:

```
0.00-1.00   sec  22.0 MBytes   184 Mbits/sec
1.00-2.00   sec  21.6 MBytes   181 Mbits/sec
2.00-3.00   sec  21.6 MBytes   182 Mbits/sec
3.00-4.00   sec  21.1 MBytes   176 Mbits/sec
4.00-5.00   sec  21.8 MBytes   183 Mbits/sec
5.00-6.00   sec  21.8 MBytes   182 Mbits/sec
6.00-7.01   sec  21.6 MBytes   181 Mbits/sec
7.01-8.00   sec  21.6 MBytes   182 Mbits/sec
8.00-9.00   sec  21.8 MBytes   182 Mbits/sec
9.00-10.00  sec  21.6 MBytes   182 Mbits/sec
0.00-10.00  sec   218 MBytes   182 Mbits/sec  receiver
0.00-10.00  sec   216 MBytes   182 Mbits/sec  receiver
```

Now to copy the images and make some interesting time lapse sequences…
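To see how the transfer and rate columns relate, and what they mean for copying the image archive, the sketch below recomputes throughput from an iperf3 summary line and estimates copy times. It assumes iperf3 reports sizes in binary units (1 MByte = 2^20 bytes) and rates in decimal bits, which is consistent with the 949 Kbits/sec figure above. The 100 GB archive size is illustrative and the helper names are hypothetical.

```python
import re

# Matches iperf3 lines such as "0.00-10.00 sec 218 MBytes 182 Mbits/sec receiver".
LINE_RE = re.compile(
    r"(?P<start>\d+\.\d+)-(?P<end>\d+\.\d+)\s+sec\s+"
    r"(?P<amount>\d+(?:\.\d+)?)\s+(?P<unit>[KMG]?Bytes)"
)

# Assumption: transfer sizes use binary multiples (KBytes = 1024 bytes).
UNIT_BYTES = {"Bytes": 1, "KBytes": 1024, "MBytes": 1024 ** 2, "GBytes": 1024 ** 3}


def parse_line(line):
    """Return (start_s, end_s, bytes) for one iperf3 interval or summary line."""
    m = LINE_RE.search(line)
    if m is None:
        raise ValueError(f"not an iperf3 interval line: {line!r}")
    nbytes = float(m["amount"]) * UNIT_BYTES[m["unit"]]
    return float(m["start"]), float(m["end"]), nbytes


def mbits_per_sec(nbytes, seconds):
    """Throughput in decimal megabits per second."""
    return nbytes * 8 / seconds / 1e6


def hours_to_copy(gigabytes, mbps):
    """Hours to move `gigabytes` (decimal GB) at a sustained `mbps` Mbit/s."""
    return gigabytes * 1e9 * 8 / (mbps * 1e6) / 3600


if __name__ == "__main__":
    for line in ("0.00-11.05 sec 1.25 MBytes 949 Kbits/sec receiver",
                 "0.00-10.00 sec 218 MBytes 182 Mbits/sec receiver"):
        start, end, nbytes = parse_line(line)
        rate = mbits_per_sec(nbytes, end - start)
        print(f"{rate:.3f} Mbit/s -> {hours_to_copy(100, rate):.1f} h per 100 GB")
```

At the slow rate an illustrative 100 GB would take roughly 230 hours (well over a week), against a little over an hour on the direct cable.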
I was able to do an initial download after a few months of images by using rsync with the following command typed into an X11 terminal on a Mac:

```
rsync -avz -e ssh /Users/OneYearTimeLapse/Data/
```

I left the command running overnight to do this. The z option means to compress on the box before sending, though this didn’t make much difference in speed; I’m guessing this is because what was gained in network bandwidth was lost in compression time. This does seem overly slow for the amount of data, although there must be considerable overhead in using the ssh transport layer.

So I followed instructions at. And all worked smoothly: I can access the box via the Mac OS X file system as /Volumes/nasbox. Note that this means the NFS server must already have been running on the TS-109 NAS box; I didn’t have to take any steps to set that up, only to configure access from the Mac. So I had a go at an rsync with no need to use ssh this time:

```
rsync -av --ignore-existing /Volumes/nasbox/images/live/ /Users/OneYearTimeLapse/Data/
```

Immediately it said building file list. The network light on the box is busily flashing, so hopefully it will indeed build a nice file list and download everything swiftly… let’s see how long it all takes… And that was ten minutes ago.

It took about 18 hours of coding and testing to choose an appropriate software implementation to support hardware sync. Keeping the delay between multiple exposures as low as possible is desirable so that not too much movement occurs in the scene (people, traffic, trees etc.) and so multiple exposures can be combined without ghosting effects. In the end the best I could do using the Canon 350D’s was 2.
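The exposure times alone put a floor under how long one bracket takes, which is why the gaps between shots matter for ghosting. The sketch below adds up the five exposures and a fixed gap between consecutive shots; the `gap_s` parameter, the 1/15 s reading of 66.6…ms and the 1.4 m/s walking speed are all illustrative assumptions, not measured values.

```python
# Exposure times of the five bracketed shots, in milliseconds.
# Assumption: "66.6...ms" is taken to mean 1/15 s.
EXPOSURES_MS = [1.0, 8.0, 1000.0 / 15, 500.0, 4000.0]


def bracket_duration_s(exposures_ms, gap_s):
    """Seconds from the start of the first exposure to the end of the last,
    assuming a fixed (hypothetical) gap between consecutive shots."""
    return sum(exposures_ms) / 1000.0 + gap_s * (len(exposures_ms) - 1)


def displacement_m(speed_m_per_s, seconds):
    """Distance a subject moving at constant speed covers in `seconds`."""
    return speed_m_per_s * seconds


if __name__ == "__main__":
    floor_s = bracket_duration_s(EXPOSURES_MS, 0.0)
    print(f"lower bound per bracket: {floor_s:.2f} s")
    # Illustrative: a pedestrian walking at about 1.4 m/s during that time.
    print(f"pedestrian moves about {displacement_m(1.4, floor_s):.1f} m")
```

Even with zero gaps a bracket takes about 4.6 s, most of it the 4000ms exposure, so every extra second between shots adds directly to how far moving subjects drift between frames.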

Author: Heidi